We’ve all inherited a legacy system at some point in our careers, the kind that worked brilliantly for decades until one day it didn’t.

A few months ago, I stood in front of a room of engineers and energy professionals and made this case: “We’re no longer commanding megawatts. We’re trying to herd a million cats… and the cats all have solar panels and batteries.” That got a chuckle from the audience, but they knew what I meant. Because the grid we’ve been running for more than 140 years was never designed for this.

It all started in September 1882 on Pearl Street in lower Manhattan. Thomas Edison flipped the switch on the world’s first commercial central power station, six coal-fired dynamos humming away in a 50-by-100-foot building, lighting up 82 customers and about 400 incandescent lamps. Edison’s direct-current (DC) system was elegant in its simplicity: generate power right where people needed it, keep everything local, and control it tightly from a single point. It felt like the future.

But DC had a hard limit. You couldn’t push it more than a mile or two without massive losses. Enter Nikola Tesla and George Westinghouse with alternating current (AC). Tesla’s polyphase system, paired with transformers, let you crank the voltage way up, ship power hundreds of kilometres with far lower losses, then step it down safely at the other end. AC won the “War of the Currents” in spectacular fashion, and that victory became the blueprint for the entire modern grid.

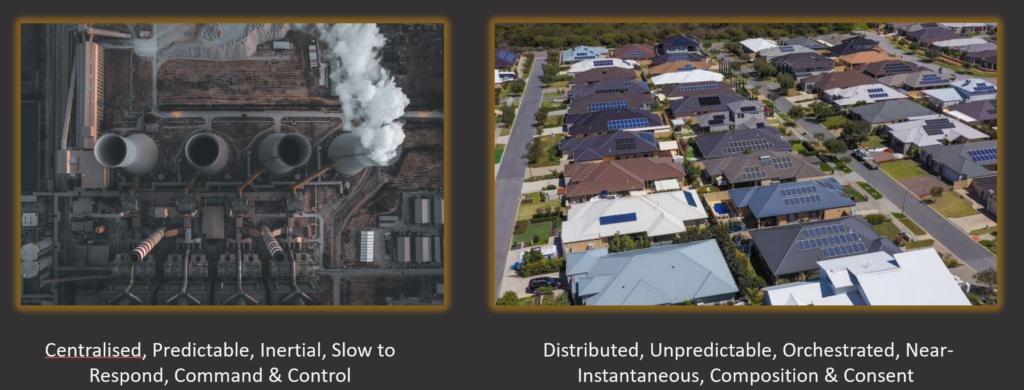

Centralised generation. High-voltage transmission lines. Predictable, inertial rotating machines that could be dispatched with a phone call or a SCADA command. A handful of big plants following the load. Command and control, top to bottom.

For more than a century this paradigm delivered reliable, affordable power to billions of people. It was deterministic. It was slow. And it was engineer-friendly in the old-school sense: you could model the whole system with a few hundred data points and a load-flow study.

Until distributed energy resources (DERs) started appearing on rooftops, in driveways, and inside battery containers—first by the thousands, then by the millions. Suddenly the “load” could generate. The “machines” became unpredictable. And the control room went from managing a dozen big thermal units to trying to orchestrate an exploding fleet of inverters that can change output faster than your code can compile.

That’s the moment we’re living in right now. The old blueprint is cracking under its own success. And if you’re an engineer who thinks in code, this is the most exciting mess you’ll ever get to debug.

A paradigm shift: from command & control to composition & consent

Let’s make this concrete with the contrast that now defines the entire energy transition.

For more than 140 years the grid operated under one clear model: centralised generation from a handful of massive power stations, high-voltage transmission lines carrying power long distances, and predictable, heavy rotating machines that responded slowly to dispatch commands but provided mechanical inertia to the system. Everything flowed in one direction, top-down, command-and-control.

That model delivered reliable power to billions of people. It was deterministic. It was engineer-friendly in the old-school sense: you could model the whole system with a few hundred data points and a load-flow study.

But the physics and economics of renewables have quietly rewritten the rules. Generation is now distributed across millions of small, independent devices: rooftop solar arrays, home batteries, EV chargers, and smart appliances. These devices are less predictable, and they can both consume and supply power. The “load” is no longer passive. The system has become bidirectional and fundamentally orchestrated rather than commanded.

And here’s where the shift gets really interesting: it doesn’t stop at the technical layer.

Beyond the bits and electrons

The moment generation moves from “the utility’s power station” to “your neighbour’s rooftop solar and their Tesla Powerwall,” the very concept of an asset changes.

Utilities no longer “own” the physical megawatts in the same way. The inverter on someone’s garage isn’t theirs to dispatch like a generator they financed and insured. Instead, they own the orchestration platform, the digital layer that negotiates, incentivises, and coordinates those millions of devices.

Asset management teams, trained for decades on maintaining giant rotating machines with 30-year depreciation schedules, now have to rethink what “asset” even means. Is it the physical hardware? Or is it the software-defined capability, the availability of flexible response, the data stream that makes orchestration possible? Entire disciplines (maintenance planning, risk registers, even regulatory reporting) will have to be rewritten while the transition continues.

This is the same transition software engineers lived through when we moved from owning bare-metal servers to orchestrating containers with Kubernetes. The hardware still exists, but the value (and the control) lives in the platform layer that composes it.

As code-literate engineers, we already speak this language. The grid is simply catching up to patterns we’ve been using for years: event-driven architecture, consent-based APIs, and orchestration instead of command-and-control scripts.

The technical change is the easy part. The organisational and conceptual rewiring that flows downstream from it? That’s where the real engineering work, and the real opportunity, begins.

The orchestration challenge: herding a million cats

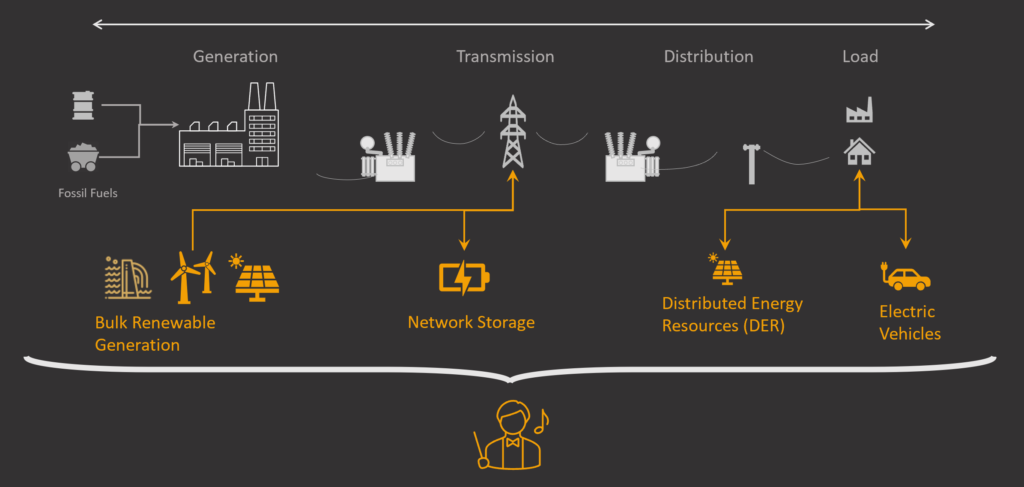

If the paradigm shift feels abstract, let me show you a diagram that makes it more concrete.

Picture the classic one-line diagram of the power system: generation on the left, transmission, distribution, and finally the load on the right. For a century that was a neat, linear flow: a few big power stations pushing electrons downhill to passive customers.

Now zoom in on the modern version. Bulk renewable farms still sit at the generation end, but suddenly there are new injection points: network-scale batteries, millions of distributed energy resources (DERs) hanging off the distribution network, and electric vehicles that can both suck power and spit it back out.

The “load” is no longer a load. It’s a two-way street.

And sitting at the bottom of the picture is a little cartoon conductor in a tuxedo, baton in hand, looking slightly panicked.

That conductor is us. Welcome to the orchestration challenge: herding a million cats that all speak slightly different dialects, change their minds every few seconds, and can decide to inject or withdraw power faster than your monitoring system can refresh.

The headaches fall into two main buckets:

Technical

- Interoperability: Every inverter, battery, EV charger, and smart appliance was built to its own spec. Getting them to play nicely together is like trying to run a microservices architecture where half the services still use SOAP and the other half insist on GraphQL.

- Cybersecurity: Suddenly your attack surface isn’t a handful of well-defended substations. It’s every rooftop in the suburbs.

- Latency: When frequency starts to wobble, you no longer have seconds to react. You have cycles.
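
To make the interoperability headache concrete, here is a minimal sketch of the adapter pattern most of us would reach for first. Everything in it is an assumption for illustration: the two vendors, their payload field names, and the `Telemetry` shape are hypothetical, not taken from any real device or standard. It only shows the idea of translating each dialect (different units, different sign conventions) into one internal shape before anything else touches the data.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass(frozen=True)
class Telemetry:
    """Common internal shape (hypothetical field names)."""
    device_id: str
    power_kw: float   # positive = exporting to the grid
    voltage_v: float


def from_vendor_a(raw: dict) -> Telemetry:
    # Hypothetical vendor A reports watts, with export as positive.
    return Telemetry(raw["id"], raw["watts"] / 1000.0, raw["volts"])


def from_vendor_b(raw: dict) -> Telemetry:
    # Hypothetical vendor B reports kilowatts, with import as positive,
    # so the sign is flipped to match the common convention.
    return Telemetry(raw["serial"], -raw["p_kw"], raw["v"])


# Registry of known dialects; supporting a new vendor means adding one entry.
ADAPTERS: Dict[str, Callable[[dict], Telemetry]] = {
    "vendor_a": from_vendor_a,
    "vendor_b": from_vendor_b,
}


def normalise(vendor: str, raw: dict) -> Telemetry:
    """Translate a vendor-specific payload into the common shape."""
    try:
        adapter = ADAPTERS[vendor]
    except KeyError:
        raise ValueError(f"no adapter registered for vendor {vendor!r}") from None
    return adapter(raw)
```

Calling `normalise("vendor_b", {"serial": "bat-7", "p_kw": 1.5, "v": 239.0})` yields a `Telemetry` with `power_kw` of `-1.5`, because that battery is importing. The design choice worth noting is that unit and sign conventions are resolved once, at the edge, rather than scattered through the orchestration logic.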

Regulatory

- Market Rules: Most markets were designed for slow, predictable thermal plants. They don’t know how to value a device that can change output when a cloud passes overhead.

- Equitability: How do you make sure the customer who can’t afford a battery isn’t subsidising the one who can?

- Value: Who actually gets paid for the flexibility they provide, and how do you measure it without drowning in disputes?

This isn’t just a control-room problem. It’s the same distributed-systems nightmare software engineers have been debugging for twenty years, only now the consequences are measured in blackouts instead of 404 errors.

The good news? We already know the patterns that work: event-driven architectures, open consensus protocols, and platforms that treat every device as an independent agent rather than a dumb endpoint waiting for commands.

The conductor doesn’t need to dictate every note anymore. They just need to set the tempo and let the orchestra improvise within the score.

Which brings us to the next unavoidable reality: the sheer volume of data this new orchestra is about to generate.

A data deluge: from trickle to tsunami

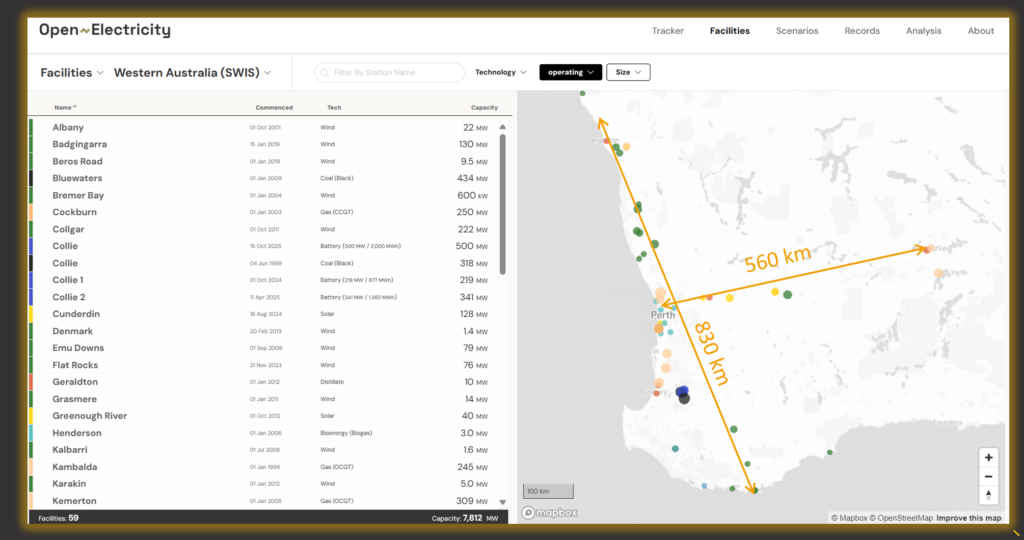

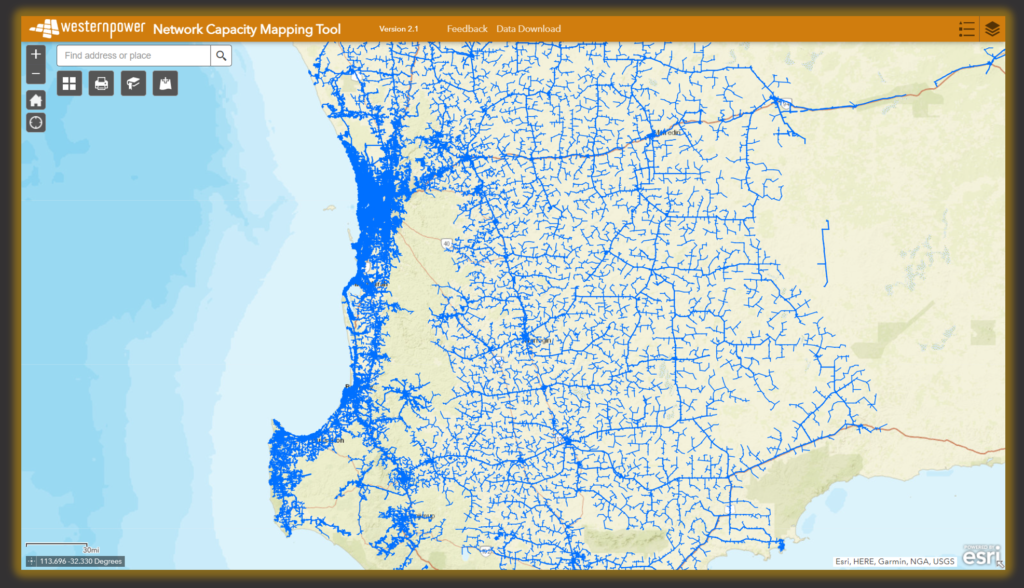

If the orchestration challenge makes you sweat, the data problem will keep you up at night. Two maps tell the whole story.

The first is from Open Electricity: a list of every large generator in Western Australia, 59 facilities in total. A handful of coal plants, some wind farms, a few big solar and battery sites. You could fit the entire list on a single printed page. The map shows neat, coloured dots scattered across the state, with distances measured in hundreds of kilometres. Manageable.

The second map is Western Power’s Network Capacity Mapping Tool. Zoom in anywhere in the south-west and you see a dense, spider-web explosion of blue lines: every single low-voltage feeder, every distribution transformer, every possible connection point. Millions of endpoints. Rooftop solar, home batteries, EV chargers, smart hot-water systems. The “facilities” are no longer 59. They’re every house on every street.

We’ve gone from a trickle of SCADA data points to a tsunami.

Remember when the biggest headache in your engineering life was a 20-tab Excel spreadsheet that crashed every time someone added a new column? That was cute. Today the control room is ingesting real-time telemetry from hundreds of thousands of inverters (voltage, frequency, state of charge, weather-corrected forecasts), updating every five minutes.

The data doesn’t just arrive; it floods. The only way through this is a closed-loop decision engine.

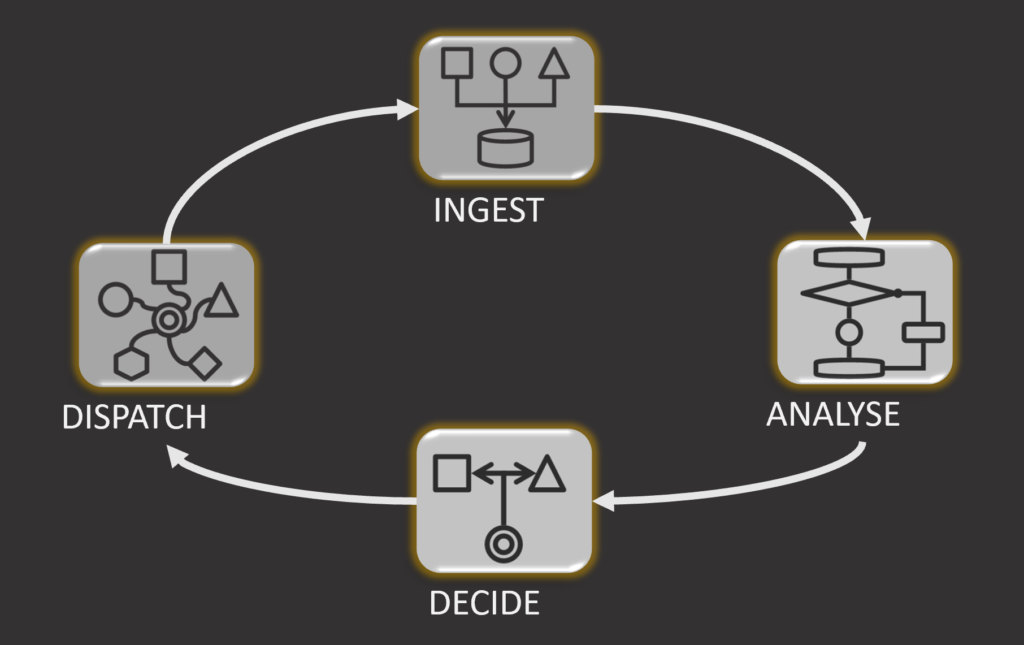

It’s the same pattern we use in every real-time control system we’ve ever built:

- INGEST: pull streams from every device.

- ANALYSE: run the physics, the forecasts, the market signals.

- DECIDE: optimise under constraints (this is where your linear programming or reinforcement-learning code lives).

- DISPATCH: send consent-based signals back out (not blunt commands).

Think of it as the grid’s new operating system kernel: a feedback loop running at grid frequency instead of CPU clock speed.
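
The four stages above can be sketched as one pass through a loop. This is a toy under stated assumptions, not a production design: the `Reading` fields, the raw payload keys (`id`, `kw`, `headroom_kw`), and the shape of the dispatch message are all hypothetical, and the DECIDE step uses a simple greedy allocation (largest consented headroom first) as a stand-in for the linear-programming or reinforcement-learning code a real engine would run.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Reading:
    device_id: str
    power_kw: float     # current export (+) or import (-)
    headroom_kw: float  # extra export the owner has consented to offer


def ingest(raw_stream: List[dict]) -> List[Reading]:
    """INGEST: parse raw telemetry into typed readings."""
    return [Reading(r["id"], r["kw"], r["headroom_kw"]) for r in raw_stream]


def analyse(readings: List[Reading], demand_kw: float) -> float:
    """ANALYSE: shortfall between demand and current net export (never negative)."""
    supply_kw = sum(r.power_kw for r in readings)
    return max(0.0, demand_kw - supply_kw)


def decide(readings: List[Reading], shortfall_kw: float) -> Dict[str, float]:
    """DECIDE: greedy stand-in for an optimiser, largest headroom first."""
    requests: Dict[str, float] = {}
    remaining = shortfall_kw
    for r in sorted(readings, key=lambda x: x.headroom_kw, reverse=True):
        if remaining <= 0:
            break
        ask = min(r.headroom_kw, remaining)
        if ask > 0:
            requests[r.device_id] = ask
            remaining -= ask
    return requests


def dispatch(requests: Dict[str, float]) -> List[dict]:
    """DISPATCH: emit requests the device may decline, not blunt commands."""
    return [
        {"device_id": dev, "request_extra_kw": kw, "may_decline": True}
        for dev, kw in requests.items()
    ]


def run_cycle(raw_stream: List[dict], demand_kw: float) -> List[dict]:
    """One pass through INGEST -> ANALYSE -> DECIDE -> DISPATCH."""
    readings = ingest(raw_stream)
    shortfall_kw = analyse(readings, demand_kw)
    return dispatch(decide(readings, shortfall_kw))
```

With two devices exporting 2 kW and 1 kW and a demand of 6 kW, `run_cycle` asks only the device with the most headroom for the missing 3 kW. A real loop would also feed the devices’ accept or decline responses back into the next INGEST, which is what makes it closed.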

We already know how to build systems like this. The question is whether we’ll treat the data deluge as a curse or as the richest signal we’ve ever had.

Spoiler: it’s the signal that makes the next three pillars possible.

The path forward: three pillars (and how programmers build them)

So, we’ve stared into the challenge: the paradigm has flipped, the cats are everywhere, and the data is trying to drown us. Now what?

The good news is that the map forward is clear. It’s not a single silver-bullet technology. It’s three mutually reinforcing pillars that any engineer who is part of this transition can contribute to.

Smarter Standards

Open protocols are the universal language we’ve been missing. IEEE 2030.5 and its Australian profile, CSIP-AUS, aren’t dusty regulatory documents; they’re the RESTful, event-driven APIs of the energy internet.

They define exactly how a battery, an EV charger, or a smart inverter should announce its capabilities, negotiate dispatch, and confirm it actually did what it promised. Think of them as the HTTP + JSON of the grid: suddenly every device speaks the same dialect, and your orchestration platform stops being a tower of Babel.

As programmers, this is our jam. We’ve spent years fighting integration hell. These standards are the contract that finally lets us treat every DER like a well-behaved microservice.
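
Here is a rough sketch of that announce, negotiate, confirm contract. To be clear about what it is not: this is not the IEEE 2030.5 or CSIP-AUS schema, and none of these class or field names come from either standard. They are hypothetical names chosen to show the shape of a consent-based exchange, where the device (on behalf of its owner) can cap or decline what the platform asks for, and the platform checks delivery against metered output afterwards.

```python
from dataclasses import dataclass


@dataclass
class Capability:
    device_id: str
    max_export_kw: float


@dataclass
class Offer:
    device_id: str
    export_kw: float


@dataclass
class Response:
    device_id: str
    accepted: bool
    agreed_kw: float


class Device:
    """Hypothetical DER that answers requests within its owner's limit."""

    def __init__(self, device_id: str, max_export_kw: float, owner_limit_kw: float):
        self.device_id = device_id
        self.max_export_kw = max_export_kw
        self.owner_limit_kw = owner_limit_kw  # what the owner consents to share

    def announce(self) -> Capability:
        """Step 1: tell the platform what the hardware can do."""
        return Capability(self.device_id, self.max_export_kw)

    def respond(self, offer: Offer) -> Response:
        """Step 2: accept up to the consented limit, or decline outright."""
        agreed = max(0.0, min(offer.export_kw, self.owner_limit_kw, self.max_export_kw))
        return Response(self.device_id, agreed > 0, agreed)


def verify_delivery(response: Response, measured_kw: float, tolerance_kw: float = 0.1) -> bool:
    """Step 3: platform-side check that metered output matches the agreement."""
    return response.accepted and abs(measured_kw - response.agreed_kw) <= tolerance_kw
```

A battery created as `Device("bat-1", 5.0, 2.0)` that is offered 3 kW will agree to 2 kW, because that is all its owner has consented to. That partial acceptance is the difference between a negotiated contract and a command.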

Modern Markets

We need economic signals that actually match the physics. Old markets were built for slow, big, predictable plants. The new ones must value three things the old system didn’t have to solve for:

- Autonomy (systems are issued controls, but they need to individually enact them)

- Location (a megawatt in the middle of a congested feeder is worth more than one at a remote wind farm)

- Flexibility (the ability to ramp up, ramp down, or even absorb power)

When markets reward these traits, the algorithms we write stop optimising for yesterday’s rules and start composing tomorrow’s grid in real time.

Radical Collaboration

Networks, operators, technology providers, and customers have to stop behaving like separate tribes. This is the hardest pillar, and the most important.

We need shared simulation environments, open-source reference implementations, and joint “orchestration sandboxes” where everyone can test ideas without risking the live grid.

The same way the open-source community built Linux by arguing in public and shipping code together, we can build the grid’s new operating system.

These three pillars turn the Digital Megawatt from a buzzword into something we can actually ship.

And when they click into place, the conductor in the tuxedo stops panicking. The orchestra starts playing something beautiful.

Because the future of the grid isn’t being commanded. It’s being composed.

Closing thoughts

The future of the grid isn’t going to be commanded from a central control room. It’s going to be composed.

In the image above: a big red power station on the left, a network-scale battery in the middle, and a cheerful suburban house with solar panels and a battery on the right. Three different scales, three different owners, all playing together in the same symphony. That image is the payoff.

The Digital Megawatt isn’t just a fancy label. It’s the critical software layer that turns millions of independent devices into a reliable, flexible, resilient system. It’s the reason the transition to Net Zero can actually be faster, cheaper, and more reliable than anyone dared hope a decade ago.

We’ve spent 140 years perfecting command-and-control. Edison’s central station and Tesla’s AC system gave us a blueprint that served humanity brilliantly. But that blueprint has reached its natural limit.

The new blueprint is being written in code, by engineers like you and me. It runs on open standards instead of proprietary SCADA scripts. It rewards consent and coordination instead of blind obedience. And it treats every rooftop inverter, every EV charger, and every home battery as a first-class citizen in the orchestra.

The cats aren’t going away. But we finally have the baton, the score, and the language to conduct something extraordinary.

Because the grid of the future isn’t going to build itself. It’s going to be programmed.

And the best part? The people best qualified to write that code are the ones reading this right now.