The “Spreadsheet of Doom”

If you have been working in any engineering field for more than a week, you will recognise this situation. We have all been there at some point.

Sometimes, it starts with you. You did this to yourself. You created it to begin with. It was born as a “quick” and “temporary” solution to a pressing business problem. One year later, it’s still here.

Other times, it starts somewhere else. With a colleague leaving. The smart one. The one with all the ideas and a knack for building “solutions”. She had been developing and operating this thing until the day she left. Now, it’s yours.

Either way, here you are. You are staring at a 20-tab, 83 MB, formula-rich monster of an Excel workbook. It holds tens of thousands of rows of data. Your colleagues know it is critical to some business functions, and yet they call it “the spreadsheet of doom”. They seem to take issue with the fact that it takes a couple of minutes to load, and then some more every time the data is refreshed and the calculations have to run.

The cells have colour coding. You’re not quite sure what each colour means any more. Apparently, it is triggered by some obscure conditional formatting that made sense a few months ago. The tabs and columns have what look like good names, just not good enough to be obvious. “What is the ‘References’ tab for anyway?” you think to yourself.

In any case, why are you even looking at the spreadsheet now? Easy. You have been asked to change it. You have been asked to “make some minor modifications” to accommodate a new business need that came up last week.

You understand the business need well enough. You also have a rough idea of what the outcome should look like. The question is, can you change the spreadsheet in all the right places? Can you be sure you didn’t miss anything? Are all the outputs correctly updated? Are you aware of all the dependencies other colleagues might have on this workbook?

Whether you can answer these questions successfully depends heavily on how this “tool” was born.

The way it starts

There is nothing wrong with facing a challenge at work and figuring out a solution that serves this need. The faster, the better. The business needs it. Now.

If you implemented it using an architecture that favoured good organisation and discipline, you will probably be able to make the changes in the right spots and be done with it.

If, on the other hand, this spreadsheet grew “organically”, if it looks more like spaghetti than a bento box, most likely you are in for a world of pain. You will poke around a little bit, change some known inputs and watch what changes. Essentially, you are using your brain’s pattern matching and inductive abilities to reconstruct a mental model of how this thing works.

As deadlines approach and frustration grows, you will eventually curse your colleague (or yourself, as applicable). Why didn’t she (you) leave more detailed comments or instructions? If only she (you) had documented things properly, you wouldn’t be in this predicament right now.

You then think, “I shouldn’t blame people, this is about using the wrong tool for the job”. You will then curse Excel. Why do they make it so easy to do the wrong thing? “Microsoft … bleh”. Why can’t I just tell the AI what I want this thing to do and have it make all the right changes for me?

The truth is that neither the tools you use nor the people who use them are the culprits. The issue is not missing or poor documentation either. Yes, documentation is good practice, but its absence is not the root cause, and it is no substitute for good design.

The problem is one of structure. It is about the way we think about problems. It is about how we build things.

Almost any tool, wielded by someone with the right frame of mind, can yield superior results. Even better than the outcomes of other more sophisticated or “fancier” alternatives.

The point I will make in this article is this: the way we think about a problem matters more than any other constraint on finding a good solution.

To understand this structured way of thinking, we need to look at how we mapped out problems before computers even existed.

Algorithms before Computers

We tend to think of algorithms as creatures of the digital age. We imagine entities born in server farms and raised on silicon. But if you strip away the syntax and the semicolons, an algorithm is simply a sequence of instructions to solve a problem. Engineers have been writing those for a lot longer than computers have existed.

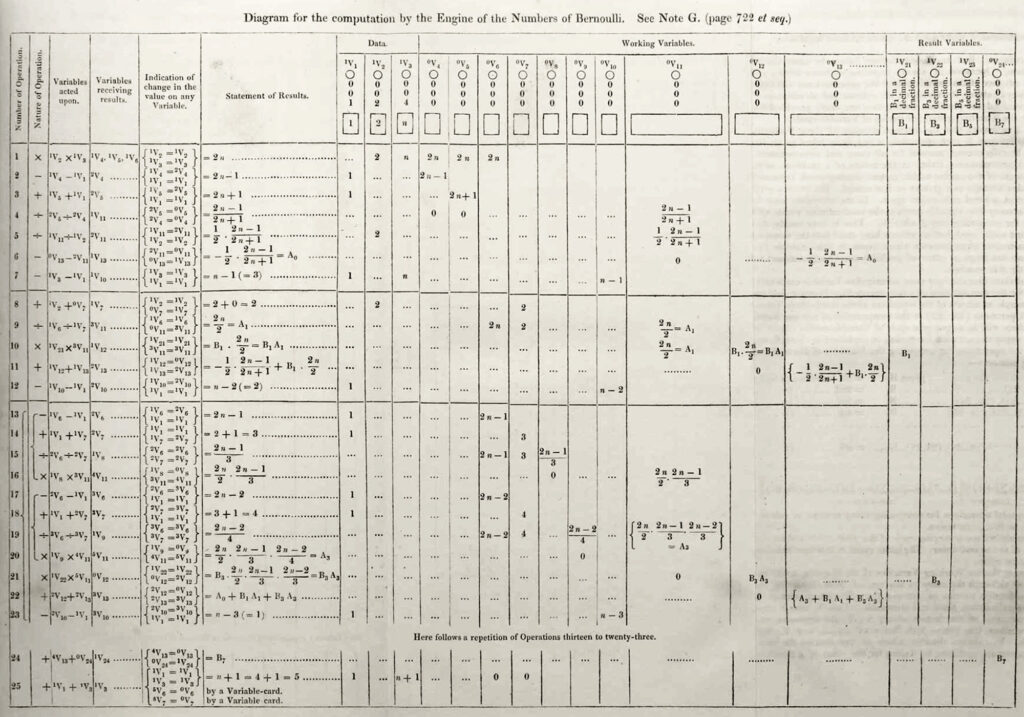

Consider the mid-nineteenth century. Long before anyone had conceived of a microprocessor, the mathematician Ada Lovelace was thinking about how to instruct a machine to perform complex work. She was studying Charles Babbage’s proposed Analytical Engine, a massive, steam-powered mechanical computer made of brass and gears. Babbage was focused on the hardware. Lovelace, however, focused on the logic.

In 1843, she published a translation of an article about the engine and added her own extensive notes. In what is now famously known as “Note G”, she detailed a rigorous, step-by-step method for the mechanical engine to calculate Bernoulli numbers.

She realised that the machine could manipulate symbols according to rules, not just crunch numbers. Her method is widely regarded as the first published algorithm explicitly intended for implementation on a machine. It was pure structural thinking applied to physical cogs and levers.
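To make the idea of “a sequence of instructions” concrete, here is a minimal modern sketch that computes the same quantity. It is not a transcription of Note G. It uses a standard textbook recurrence and exact fractions, and the function name `bernoulli_numbers` is my own choice for illustration.

```python
from fractions import Fraction
from math import comb


def bernoulli_numbers(n_max: int) -> list[Fraction]:
    """Return [B_0, ..., B_n_max] as exact fractions (convention B_1 = -1/2)."""
    b = [Fraction(1)]  # B_0 = 1
    for m in range(1, n_max + 1):
        # Standard recurrence: sum over k = 0..m of C(m+1, k) * B_k equals 0.
        # Solving that identity for B_m gives the line below.
        acc = sum(comb(m + 1, k) * b[k] for k in range(m))
        b.append(-acc / (m + 1))
    return b


numbers = bernoulli_numbers(8)
print(numbers[2], numbers[4], numbers[6], numbers[8])  # 1/6 -1/30 1/42 -1/30
```

Every step is explicit and nothing depends on intuition, which is exactly what a machine made of gears would have required.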

Yet, the visual tools we use today to map out this kind of logic did not come from early mathematicians. They came from the factory floor.

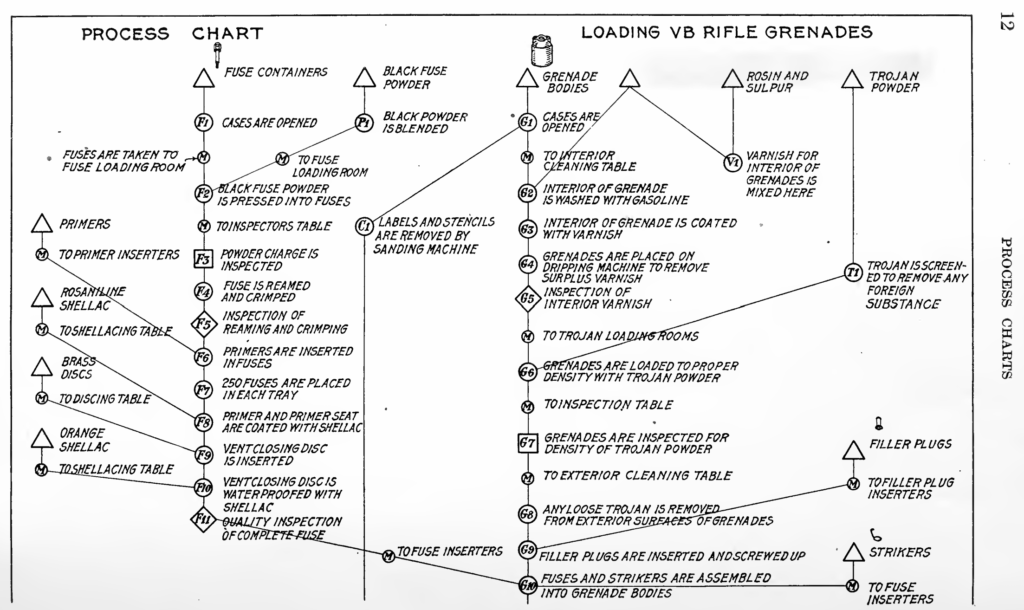

It is 1921. The American Society of Mechanical Engineers (ASME) is holding its annual meeting. Frank and Lillian Gilbreth, a husband-and-wife team of industrial engineers, are presenting a paper titled “Process Charts: First Steps in Finding the One Best Way to Do Work.”

The Gilbreths were obsessed with efficiency. They studied bricklayers, surgeons, and factory workers. They broke down every complex human action into 18 elemental motions they called “Therbligs”.

To map these motions, they invented a graphical language. A small circle for an operation, a square for an inspection, a triangle for storage. They linked these symbols with arrows to show the flow of material and information. They were not trying to program a computer. They were trying to debug a process.

Fast forward to 1947. Herman Goldstine and John von Neumann are at the Institute for Advanced Study in Princeton, working out how to program one of the earliest electronic computers. They need a way to map out the decision points before committing logic to the machine. They look around for a solution and find the Gilbreths’ process charts. Von Neumann and Goldstine adapt those industrial symbols for their new digital realm.

This is a profound realisation for us as engineers. When you sketch a flowchart to plan a Python script, you are using a tool designed to optimise industrial engineering processes.

The “Black Box” Mental Model

This industrial heritage highlights a crucial shift in perspective. It moves us away from intuitive, organic problem solving and towards the “Black Box” mental model.

In traditional engineering, you might look at a complex system and try to understand everything all at once. Algorithmic thinking forces you to be systematic. You define the Inputs, you define the Transformation, and you define the Outputs. The inside of the box does not matter yet.
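As a rough sketch of what this looks like once written down, here is a black box in Python. The pump example, the names `PumpDuty`, `PumpPower` and `size_pump`, and the assumption that the fluid is water are all mine, chosen purely for illustration. The transformation is the familiar hydraulic power relation, power = density × g × flow × head.

```python
from dataclasses import dataclass

RHO_WATER = 1000.0  # kg/m^3, assumed fluid density (water)
G = 9.81            # m/s^2, gravitational acceleration


@dataclass(frozen=True)
class PumpDuty:
    """Inputs to the box."""
    flow_m3_per_s: float
    head_m: float
    efficiency: float  # fraction between 0 and 1


@dataclass(frozen=True)
class PumpPower:
    """Outputs of the box."""
    hydraulic_kw: float
    shaft_kw: float


def size_pump(duty: PumpDuty) -> PumpPower:
    """Transformation: the only place where the physics lives."""
    hydraulic_kw = RHO_WATER * G * duty.flow_m3_per_s * duty.head_m / 1000
    return PumpPower(hydraulic_kw=hydraulic_kw,
                     shaft_kw=hydraulic_kw / duty.efficiency)


print(size_pump(PumpDuty(flow_m3_per_s=0.05, head_m=30.0, efficiency=0.75)))
```

Anyone using `size_pump` only needs to agree on what goes in and what comes out. The body can be rewritten later without touching anything that depends on it.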

This approach relies on a few core pillars. First is Deterministic Logic. If A happens, then B must happen. Always. There is no room for “maybe” or “sometimes” in a well-designed system.

Second is Decomposition. You cannot debug an entire car engine at once. You must break it down and debug the fuel injection system separately.

Finally, there is Abstraction. Knowing that a valve opens is critical for the system logic. Knowing exactly how the solenoid moves the internal pin is an irrelevant detail at the system level. You abstract the detail away to focus on the flow.

Functional Engineering Connection

When you take this Black Box model to its logical conclusion, you arrive at a concept software developers call Functional Programming. This paradigm is incredibly useful for engineers to understand because it mirrors the way we design reliable, failsafe physical systems.

Functional programming relies heavily on “Pure Functions”. A pure function operates on two strict rules. First, if you give it the same inputs, it must always return the exact same output. Second, it must produce No Side Effects. It cannot secretly change a variable somewhere else in the program while it runs.
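A small sketch of the difference, with made-up names (`settings`, `design_load_impure`, `design_load`) and an unrealistic but easy-to-follow side effect:

```python
# Hidden shared state, similar to a cell that other tabs quietly depend on.
settings = {"safety_factor": 1.5}


def design_load_impure(load_kn: float) -> float:
    """Impure: reads hidden state AND changes it as a side effect."""
    settings["safety_factor"] += 0.5  # silently affects every later caller
    return load_kn * settings["safety_factor"]


def design_load(load_kn: float, safety_factor: float) -> float:
    """Pure: the result depends only on the arguments, and nothing else changes."""
    return load_kn * safety_factor


# The same input gives different answers depending on call history.
print(design_load_impure(100.0), design_load_impure(100.0))

# The same inputs always give the same answer.
print(design_load(100.0, 1.5), design_load(100.0, 1.5))
```

The pure version is also far easier to test, because a test only has to supply the arguments and check the return value.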

Think about the “Spreadsheet of Doom” from earlier. The reason it breaks is almost always because of unintended side effects. You change a cell on Tab 1, and it secretly alters a hidden reference on Tab 14, which consequently breaks a chart on Tab 3. The state of the system is tangled and unpredictable.

A good engineering design, just like a pure function, isolates its logic. If you design a standalone pump skid, its operation should depend only on the fluid coming in and the power supplied to it. It should not accidentally affect the control logic of a completely different system across the plant.

Another core functional programming concept is Immutability. In functional code, once data is created, it cannot be changed. If you want to update it, you do not overwrite it. You create a brand new copy with the changes applied.

This is exactly how we should treat our engineering data. Your raw survey data or your initial laboratory test results are immutable. You never overwrite the original file. You take the raw data as an input, run it through your “Black Box” calculation tool, and generate a new output file.
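In Python, one way to sketch this is with frozen dataclasses from the standard library. The `Reading` type, the sample values and the `apply_calibration` helper below are hypothetical, chosen only to show the pattern.

```python
from dataclasses import FrozenInstanceError, dataclass, replace


@dataclass(frozen=True)
class Reading:
    sample_id: str
    strength_mpa: float


def apply_calibration(readings: tuple[Reading, ...],
                      offset_mpa: float) -> tuple[Reading, ...]:
    """Return NEW readings with the offset applied; the originals are untouched."""
    return tuple(replace(r, strength_mpa=r.strength_mpa + offset_mpa)
                 for r in readings)


raw = (Reading("S1", 30.0), Reading("S2", 32.5))  # illustrative lab results
corrected = apply_calibration(raw, offset_mpa=0.5)

print(raw[0].strength_mpa, corrected[0].strength_mpa)  # 30.0 30.5

try:
    raw[0].strength_mpa = 99.0  # attempting to overwrite the raw data
except FrozenInstanceError:
    print("raw data is read-only")
```

Because the raw readings cannot be modified, you can always rerun the calculation from the original inputs and compare results.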

By applying these functional programming concepts to your general workflow, you stop building fragile spreadsheets and start building predictable, testable, and robust engineering pipelines.

Coding Concepts vs. Engineering Reality

Learning to write code forces you to exercise this “Black Box” muscle in a very specific way. A computer does not understand “about 50ish”. It does not possess intuition. This relentless demand for strict logic changes how you approach physical problems.

Let us look at how the three pillars of algorithmic thinking map directly to the engineering challenges we face every day, and how they help cure the “Spreadsheet of Doom” syndrome.

1. Deterministic Logic

In programming, logic must be deterministic. If you run a script with the exact same inputs, it must yield the exact same outputs. A program does not guess. If a variable is undefined, the code simply stops.

In the physical world, we often rely on implicit knowledge. We have all seen plants where an operator knows to “turn the valve until the pipe hums a certain way”. We see spreadsheets where you just have to “know” not to sort column C because it breaks the formulas in column D. This is non-deterministic engineering. It relies on hidden human memory and luck.

When you learn to code, you build an intolerance for this ambiguity. You start designing engineering processes that are explicitly defined. You replace the operator’s “rule of thumb” with a strict sensor-driven control loop. You replace the fragile spreadsheet with a script that validates every piece of input data before it runs. You learn to build systems that produce the exact same result regardless of who is sitting at the desk or standing on the factory floor.
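As a minimal sketch of “validate every piece of input data before it runs”, consider the following. The flow-rate example, the function names and the 0 to 500 L/s limits are assumptions made for illustration, not values from any standard.

```python
import math


def validate_flow_readings(readings, min_lps=0.0, max_lps=500.0):
    """Return the readings as floats, or raise ValueError listing every problem."""
    if not readings:
        raise ValueError("no readings supplied")
    problems = []
    for index, value in enumerate(readings):
        if isinstance(value, bool) or not isinstance(value, (int, float)):
            problems.append(f"row {index}: {value!r} is not a number")
        elif math.isnan(value):
            problems.append(f"row {index}: value is missing (NaN)")
        elif not min_lps <= value <= max_lps:
            problems.append(f"row {index}: {value} is outside {min_lps} to {max_lps} L/s")
    if problems:
        raise ValueError("; ".join(problems))
    return [float(v) for v in readings]


def mean_flow_lps(readings):
    """The calculation never sees data that has not passed validation."""
    clean = validate_flow_readings(readings)
    return sum(clean) / len(clean)


print(mean_flow_lps([10.0, 20.0, 30.0]))  # 20.0
```

The rules that used to live in someone’s head (“never put a negative flow in that column”) are now written down and enforced on every run, for every user.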

I’m not naive, and I know the real world does not always lend itself to exact and precise rules. Very early in my career I learned about the “fudge factors” used by the best engineers I’ve ever met. However, even in these cases, the factors and tolerances can be quantified, encoded and treated as part of the logic. It’s not about eliminating these factors. It’s about knowing where they go, how big they are, what they affect and how sensitive the final outcomes are to variations in the inputs. The Six Sigma toolbox has a wide range of tools to do precisely this.
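Here is one way this could look in code. The thickness formula, the default factor of 1.15 and the 1.5 mm allowance are invented placeholders. The point is only that the fudge factor becomes a named, visible parameter whose influence can be measured.

```python
def required_thickness_mm(calculated_mm: float,
                          fudge_factor: float = 1.15,
                          corrosion_allowance_mm: float = 1.5) -> float:
    """Hypothetical rule: scale the calculated value, then add an allowance."""
    return calculated_mm * fudge_factor + corrosion_allowance_mm


def sensitivity_to_fudge(calculated_mm: float,
                         fudge_factor: float = 1.15,
                         step: float = 0.01) -> float:
    """Central-difference estimate of d(thickness) / d(fudge_factor)."""
    low = required_thickness_mm(calculated_mm, fudge_factor - step)
    high = required_thickness_mm(calculated_mm, fudge_factor + step)
    return (high - low) / (2 * step)


print(required_thickness_mm(10.0))   # thickness with the default factor
print(sensitivity_to_fudge(10.0))    # mm of thickness per unit change in the factor
```

The same one-at-a-time approach works for any input, which makes it a cheap first check before reaching for heavier statistical tools.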

2. Decomposition

A novice programmer will often try to write a single, massive script that does everything at once. It reads the file, cleans the data, runs the math, and plots the chart all in one giant block. When it breaks, finding the error is nearly impossible. Experienced developers use decomposition. They write small, isolated, highly testable functions for each specific task.

This concept of modularity is not alien to us. If you are building a processing plant, you do not design it as one solid, inseparable block of steel and concrete. You break it down into the power supply, the pumping stations, the heat exchangers, and the control systems. You test a pump on a test bench before you ever connect it to the main piping network. You do this so that if a bearing fails later, you only have to fix the pump. You do not have to dismantle the entire facility.

Yet, when we transition from physical hardware to digital tools or workflows, we often abandon this wisdom.

This is exactly why the “Spreadsheet of Doom” is so painful. It is a digital monolith. The data entry, the business logic, and the presentation layer are all tangled together in a dense web of cell references. The human brain can only hold a handful of variables in active memory at once. When you intertwine twenty tabs of unstructured calculations, you far exceed that cognitive limit.

Algorithmic thinking trains you to physically decouple these elements in your digital work, just as you would in the physical world. You realise that your raw data should live in a simple CSV file or a database. Your calculations should happen in an isolated script or a dedicated processing tool. Your presentation should be a completely separate dashboard or report.

By decomposing the engineering workflow, you can “unit test” your calculations independently of your formatting. You ensure that updating a chart colour will never accidentally break your structural load calculations. You stop building fragile monoliths and start building robust, modular solutions.
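A compact sketch of that separation, using only the standard library. The column name `load_kn`, the sample data and the function names are hypothetical. In practice the CSV text would come from a file rather than a string.

```python
import csv
import io


def load_rows(csv_text: str) -> list[dict[str, str]]:
    """Data layer: turn raw CSV text into rows. No calculations here."""
    return list(csv.DictReader(io.StringIO(csv_text)))


def parse_loads(rows: list[dict[str, str]]) -> list[float]:
    """Cleaning layer: extract and convert the one column we need."""
    return [float(row["load_kn"]) for row in rows]


def total_load(loads: list[float]) -> float:
    """Calculation layer: pure arithmetic, trivially unit-testable."""
    return sum(loads)


def format_report(total_kn: float) -> str:
    """Presentation layer: formatting only, cannot affect the numbers."""
    return f"Total load: {total_kn:.1f} kN"


RAW = "member,load_kn\nB1,12.5\nB2,7.5\n"  # stand-in for a raw data file
report = format_report(total_load(parse_loads(load_rows(RAW))))
print(report)  # Total load: 20.0 kN

# A "unit test" of the calculation, independent of files and formatting.
assert total_load([12.5, 7.5]) == 20.0
```

Changing `format_report` to use different wording or units of display cannot break `total_load`, because the two never share anything except a single number.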

3. Abstraction

In software, abstraction is how we survive complexity. When a programmer needs to calculate the sine of an angle, they do not write a complex Taylor series expansion from scratch. They import a math library and call a simple function. They trust the interface. They abstract away the internal math so they can focus on the higher-level program.

Engineers already do this with physical hardware. When you specify a gearbox for a conveyor system, you care about the input speed, the output torque, and the mounting footprint. You do not re-calculate the involute profile of every gear tooth inside the casing. You abstract the internal complexity and treat the gearbox as a black box with known inputs and outputs.

However, we often forget this principle when dealing with data or processes. We let irrelevant details clutter our decision-making. We build tools that require the user to understand the entire underlying physics model just to get a simple budget estimate.

Coding trains you to build clean interfaces. You learn to hide the messy calculation logic behind a simple user prompt. You learn to respect the boundary between the inner workings of a tool and the person using it.
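As an illustration, here is a sketch of a budget tool with a deliberately small interface. The steel density of 7850 kg/m³ is a typical textbook value. The price per kilogram, the default wall thickness and all function names are placeholders I made up for the example.

```python
import math

STEEL_DENSITY_KG_M3 = 7850.0  # typical value for carbon steel
PRICE_PER_KG = 2.0            # placeholder rate, not a real market price


def _steel_mass_kg(length_m: float, outer_diameter_mm: float, wall_mm: float) -> float:
    """Internal detail: mass of a hollow cylinder. Callers never need to see this."""
    outer_m = outer_diameter_mm / 1000
    inner_m = outer_m - 2 * wall_mm / 1000
    area_m2 = math.pi * (outer_m**2 - inner_m**2) / 4
    return area_m2 * length_m * STEEL_DENSITY_KG_M3


def estimate_pipe_cost(length_m: float, outer_diameter_mm: float,
                       wall_mm: float = 6.0) -> float:
    """Public interface: a few plain inputs in, one budget figure out."""
    if length_m <= 0 or wall_mm <= 0 or outer_diameter_mm <= 2 * wall_mm:
        raise ValueError("length, wall and diameter must describe a real pipe")
    return round(_steel_mass_kg(length_m, outer_diameter_mm, wall_mm) * PRICE_PER_KG, 2)


print(estimate_pipe_cost(length_m=100.0, outer_diameter_mm=200.0))
```

The person asking for a budget figure calls `estimate_pipe_cost` and never has to think about cross-sectional areas. If the costing model later becomes more sophisticated, the interface can stay the same.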

Abstraction is often one of the hardest aspects of software design to converge on. Every mind might have a slightly different view and understanding of the problem. This is often enough to end up in completely disconnected territories when it comes to abstracting a concrete concept that needs to be implemented in software. Add to this the various hand-overs that occur during the normal lifecycle of a project, and things can go sideways very quickly.

If you are interested in the concept of abstraction, I would recommend reading Bret Victor’s excellent article and watching his YouTube video on “The Ladder of Abstraction”.

Closing Thoughts

The value of learning to program is not that you will become a software developer. You are a mechanical, civil, or electrical engineer. You deal with the physical world.

But when you learn to code, you upgrade your brain’s operating system. You stop seeing problems as isolated tasks. You start seeing them as systems. You stop solving the same problem twice.

This creates a new kind of professional. The Code-Literate Engineer.

This is the engineer who understands the physical constraints of a bridge but also possesses the algorithmic mindset to automate the design process. In a world that is becoming increasingly digitised, the engineer who can only do the math is at a disadvantage. The engineer who can automate the math, validate the inputs, and handle the exceptions is the one who will lead the project.

So, open that text editor. Write a script. Break a big problem into small functions. You aren’t just writing code. You are sharpening the oldest tool in the engineer’s toolbox. You are learning to think.